The Future of Disinformation

and how disinformation kills

Disinformation also kills. The case of Su Chii-cherng, a Taiwanese diplomat in Japan, is illustrative. A disinformation campaign was launched against him while he was posted to Japan as Taiwan’s representative. Several Taiwanese tourists got into trouble while travelling in Japan. When they asked for help, Su Chii-cherng allegedly dismissed them instead of assisting on behalf of the government he represented. The narrative, shared across social media and repeated by news organisations, claimed that it was the Chinese embassy that ended up helping the Taiwanese tourists.

This was a lie. Later, a student in Taiwan was linked to this disinformation campaign. The truth emerged too late: the diplomat killed himself a few days after the campaign was launched.

Disinformation as a part of military ambition

Right now, disinformation is possibly killing an even greater number of people in the military conflict in Ukraine. The Russian government uses social media and traditional media to persuade its citizens, as well as citizens of other countries, to join Putin’s invasion of Ukraine.

The Russian government uses multiple fabricated narratives in this persuasion, or propaganda, campaign. For example, its propaganda claims that the Ukrainians fighting Russian forces are not defending their own country, families and homes. Instead, it says, Russians need to fight Ukrainians because the latter support the fascist and Nazi ideologies that have allegedly prevailed in Ukraine since the Euromaidan revolution of 2014. Another claim holds that Ukraine is essentially the same country as Russia and that Russia therefore has the full right to determine what happens in Ukraine politically and culturally.

Another place where a military conflict is anticipated in the near future is Taiwan. This perhaps explains why some rankings consider Taiwan one of the places “most affected by disinformation.” Over the last few years, Taiwan has risen sharply in these rankings. Many people link most of the campaigns that the Taiwanese face to China’s growing ambition to absorb the country, a goal that has only expanded since 2020.

The Microsoft threat intelligence team confirms this suspicion. According to its reports, China became the first nation it “observed” to use AI content “in attempts to influence a foreign election.” The inauthentic use of AI during the Taiwan 2024 presidential elections included spreading AI-generated audio, creating AI-generated anchors and AI-enhanced videos. All this audio and video content was used to target some prominent anti-China politicians.

Another example of AI use during the Taiwan 2024 elections includes AI-generated avatars and images circulated on social media platforms mimicking voters and promoting false narratives about political candidates, campaign issues or election integrity.

Those China-influenced elections became so prominent that other countries started considering them as a possible blueprint for what future AI-affected elections across the world will look like. This is important because many leading information experts anticipate that the use of AI will only worsen the state of the global information environment, and developing countries might feel the hit first.

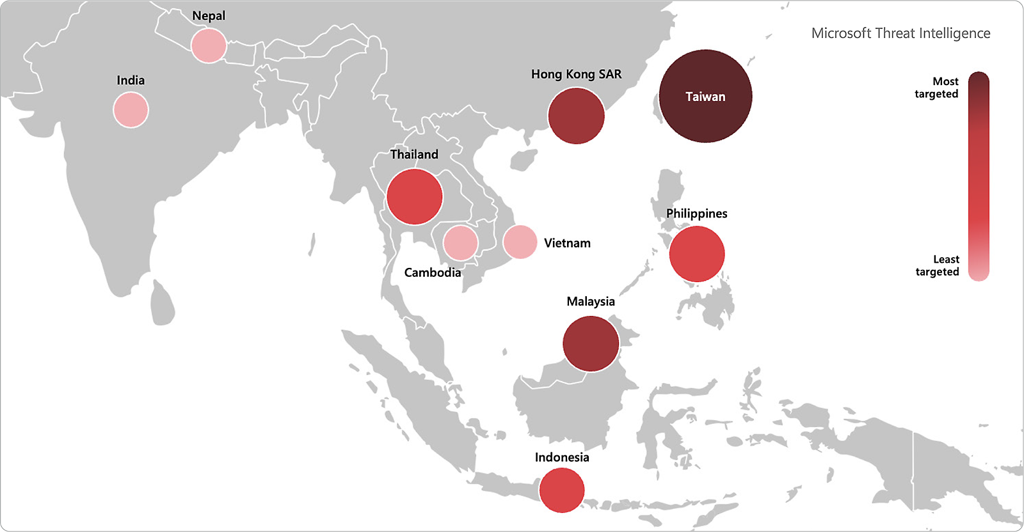

Some other countries involved in a military conflict or located close to one, such as Latvia and Palestine, are also prominent targets of information campaigns, according to the same ranking. Beyond disinformation campaigns, another ranking focused on cyber threats ranked Taiwan as a top target in the Southeast Asia region. This highlights how military activity goes hand in hand with disinformation and cyber troop activity.

Moving forward

It’s hard to estimate the level of support for the invasion of Ukraine within Russia. We do know, however, that many people who joined the Russian forces as volunteers and participated in the war are victims of Russian state propaganda.

The stories of the Taiwanese diplomat and the Russian volunteers remind us that propaganda is dangerous and can kill people, while the stories of Chinese involvement in foreign elections show how AI can be abused in the future.

This highlights the importance of strong regulation, by public bodies, of how AI algorithms are developed.

Based on a talk given by Dr Aliaksandr Herasimenka at the University of Oxford on September 16, 2024.